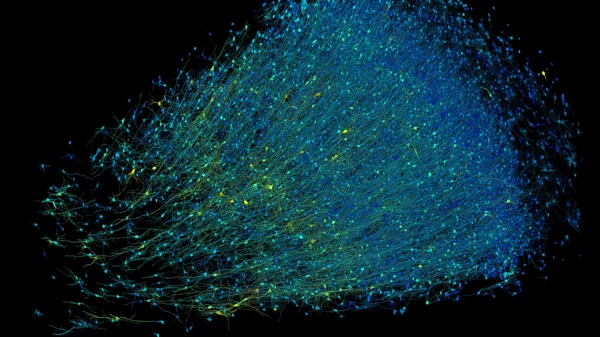

Documents newly obtained by WIRED reveal that AI surveillance software was used to monitor the movements, behavior, and body language of thousands of London Underground passengers. The software, combined with live CCTV footage, was meant to detect potential crimes or unsafe situations, such as aggressive behavior or the presence of weapons.

Between October 2022 and September 2023, Transport for London (TfL) conducted a trial at Willesden Green Tube station, testing 11 algorithms to monitor passenger behavior. This trial marked the first time TfL combined AI with live video footage to generate alerts for frontline staff. Over 44,000 alerts were issued during the trial period, with 19,000 being delivered to station staff in real time.

The trial targeted not only safety incidents but also criminal and antisocial behavior. AI models were used to detect wheelchairs, prams, vaping, and unauthorized access to restricted areas, among other things. However, the documents also revealed errors made by the AI system, such as flagging children as potential fare dodgers and struggling to distinguish between different types of bikes.

Privacy experts raised concerns about the accuracy of the AI algorithms and the potential expansion of surveillance systems in the future. While the trial did not involve facial recognition, the use of AI to analyze behaviors and body language raised ethical questions similar to those posed by facial recognition technologies.

In response to inquiries, TfL stated that existing CCTV images and AI algorithms were used to detect behavior patterns and provide insights to station staff. It emphasized that the trial data was in line with data policies and that there was no evidence of bias in the collected data.

Moving forward, TfL is considering a second phase of the trial but stated that any wider rollout of the technology would depend on consultation with local communities and stakeholders.

Overall, the trial demonstrated the potential of AI surveillance in public spaces but also highlighted challenges and ethical considerations associated with its use. As the use of AI in public spaces continues to grow, it’s essential to ensure transparency, oversight, and public consultation to maintain trust and address concerns about privacy and surveillance.